RoboCare

An Alexa-Enabled Human-Robot Interface for Safely Caring for Covid-19 Patients. With K. Shah and M. Mehtaz

Project By: Tanmay Agarwal, Kathan Shah and Muntaqim Mehtaz

Supervisor: Dr. Junaed Sattar

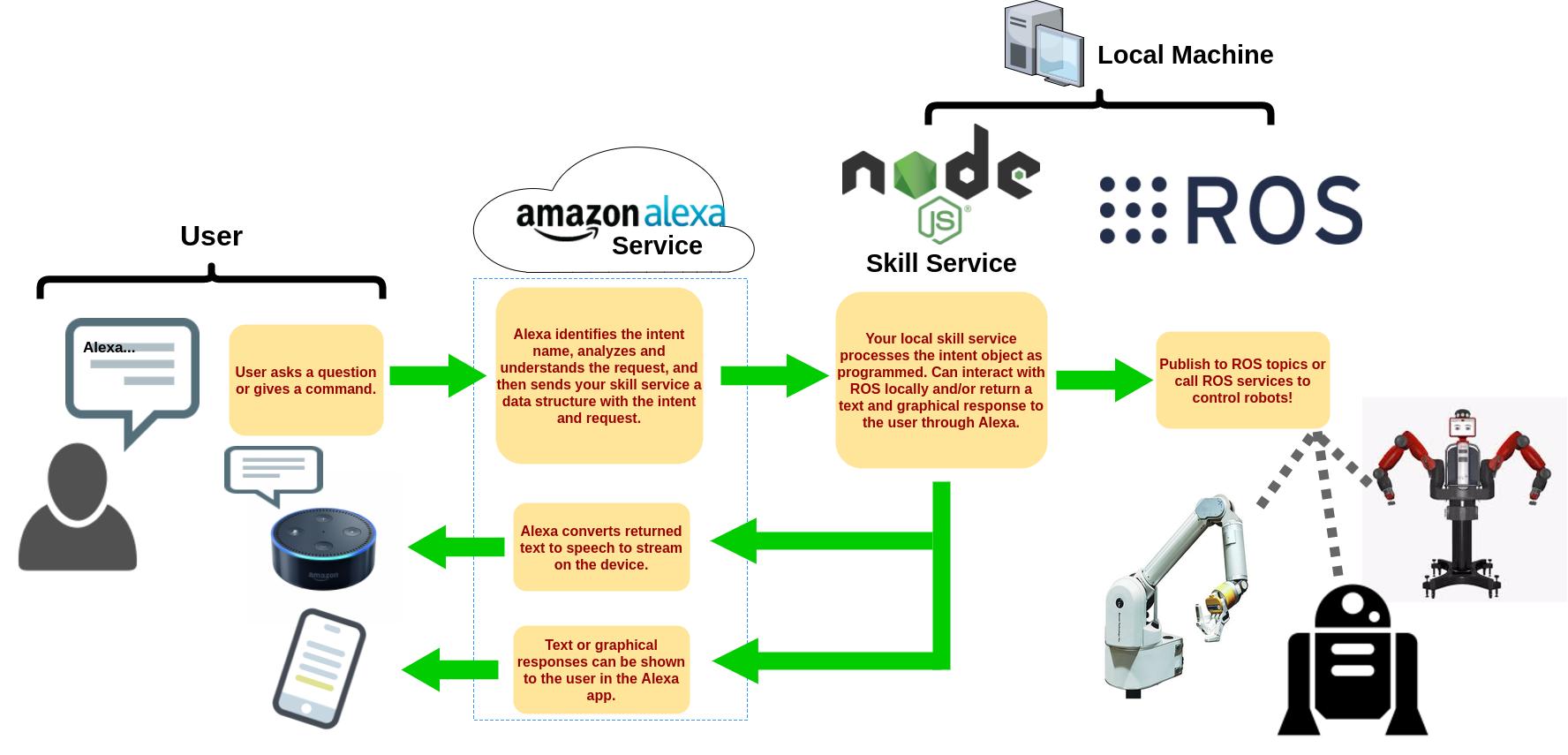

This project simulates a TiaGo humanoid robot as a "Home Caretaking Robot". A user can speak to an Alexa device in their apartment to command a linked robot anywhere in the world. The project was built during the coronavirus pandemic as a proof of concept for caring for contagious patients while keeping hospital staff safe.

Users can communicate with the robot through two modes:

- Hand Gestures - Each room in the simulation is given a number. To tell the robot to go to room 1, hold up one finger; hold up two fingers for room 2, and so forth.

- Voice Commands - The robot can be commanded through Alexa. Simply say "Alexa, tell RoboCare to go to room X".
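Both modes ultimately reduce to the same thing: a room number that the robot turns into a navigation target. The sketch below illustrates that mapping under stated assumptions. The room coordinates in `ROOM_GOALS` and the helper names (`room_from_gesture`, `room_from_utterance`, `goal_for_room`) are hypothetical and are not taken from the project's code; the finger count is assumed to arrive already computed by the gesture detector.

```python
import re
from typing import Optional, Tuple

# Hypothetical map-frame (x, y) goals for each numbered room; the real
# simulation's room layout is not documented here.
ROOM_GOALS = {
    1: (2.0, 1.5),
    2: (2.0, -1.5),
    3: (-2.0, 1.5),
    4: (-2.0, -1.5),
}

# Spoken number words that a voice transcript might contain.
_WORD_TO_NUM = {"one": 1, "two": 2, "three": 3, "four": 4, "five": 5}


def room_from_gesture(finger_count: int) -> Optional[int]:
    """One raised finger means room 1, two fingers room 2, and so on."""
    return finger_count if finger_count in ROOM_GOALS else None


def room_from_utterance(text: str) -> Optional[int]:
    """Extract a room number from text like 'go to room 2' or 'go to room two'."""
    match = re.search(r"\broom\s+(\d+|[a-z]+)\b", text.lower())
    if not match:
        return None
    token = match.group(1)
    room = int(token) if token.isdigit() else _WORD_TO_NUM.get(token)
    # Reject rooms that do not exist in the (hypothetical) map.
    return room if room in ROOM_GOALS else None


def goal_for_room(room: Optional[int]) -> Optional[Tuple[float, float]]:
    """Look up the navigation goal for a room; None if the room is unknown."""
    return ROOM_GOALS.get(room) if room is not None else None
```

For example, `goal_for_room(room_from_utterance("Alexa, tell RoboCare to go to room 3"))` and `goal_for_room(room_from_gesture(3))` resolve to the same target. In a ROS setup such a coordinate would typically be wrapped in a navigation goal (for instance a `move_base` goal); that step is left out so the sketch stays self-contained.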

Code and instructions are available in this GitHub repository.

Overview of the project's infrastructure, showing how the robotics stack, the cloud stack for Alexa, and the computer vision stack for hand-gesture detection interact.
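On the cloud side, a voice command reaches the robot through an Alexa skill backend. The following is a minimal sketch, assuming a custom skill whose handler receives the standard Alexa JSON request and returns a plain-text speech response. The intent name `GoToRoomIntent`, the slot name `RoomNumber`, and the `send_to_robot` callback (standing in for whatever bridge forwards commands to the robotics stack) are hypothetical, not names from the project.

```python
from typing import Any, Callable, Dict

# Hypothetical intent and slot names; the project's skill model is not shown here.
INTENT_NAME = "GoToRoomIntent"
SLOT_NAME = "RoomNumber"


def _speak(text: str, end_session: bool = True) -> Dict[str, Any]:
    """Build a minimal Alexa skill response containing plain-text speech."""
    return {
        "version": "1.0",
        "response": {
            "outputSpeech": {"type": "PlainText", "text": text},
            "shouldEndSession": end_session,
        },
    }


def handle_alexa_request(
    event: Dict[str, Any], send_to_robot: Callable[[int], None]
) -> Dict[str, Any]:
    """Parse an Alexa request and forward a room number to the robot."""
    request = event.get("request", {})
    if request.get("type") != "IntentRequest":
        # e.g. a LaunchRequest ("Alexa, open RoboCare"): keep the session open.
        return _speak("Tell RoboCare which room to go to.", end_session=False)

    intent = request.get("intent", {})
    if intent.get("name") != INTENT_NAME:
        return _speak("Sorry, RoboCare did not understand that command.")

    # Slot values arrive as strings, e.g. "2" for a number slot.
    value = intent.get("slots", {}).get(SLOT_NAME, {}).get("value")
    if value is None or not str(value).isdigit():
        return _speak("Which room should RoboCare go to?", end_session=False)

    room = int(value)
    send_to_robot(room)  # hypothetical bridge to the robotics stack
    return _speak(f"Sending RoboCare to room {room}.")
```

Passing the forwarding step in as a callback keeps the request parsing testable without a live robot or cloud connection; in a deployment it might publish to a message queue or a connection held open by the robot.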


Left: Simulation environment set up with different rooms. Right: The TiaGo humanoid robot completing a task inside the simulator.